PROXY MEMORANDUM

To: Shareholders of Meta Platforms, Inc. (the “Company” or “Meta”)

From: The Anti-Defamation League (“ADL”) & JLens (together, “we”)

Date: April 28, 2026

Re: The case to vote FOR Proposal 8 (“Report on Addressing Antisemitism and Hate in Online Platforms”) on Meta’s 2026 Proxy Statement

We urge you to vote FOR the “Report on Addressing Antisemitism and Hate in Online Platforms” (Proposal 8) in Meta’s 2026 Proxy Statement.

Proposal 8 seeks to address material risks associated with Meta’s management of antisemitism and other forms of hate speech on its platforms, with implications for revenue, costs, and long-term shareholder value. The proposal requests that the Company prepare and publish a comprehensive report detailing the Company’s policies, practices, and effectiveness in addressing antisemitism and other forms of online hate on its platforms and services.

Resubmission of a Critical Proposal

Shareholders voted on a substantially similar proposal at Meta’s 2025 annual meeting (the “2025 Proposal”). The 2025 Proposal was submitted prior to Meta’s January 2025 decision to end its third-party fact-checking program in the United States and significantly reduce its content moderation efforts. [1] As explained in this memo, research has shown that, following these policy changes, the risks identified in the 2025 Proposal, including rising antisemitism and other forms of hate targeting specific communities, have persisted and, in certain respects, may have intensified. We believe this, in turn, exposes the Company and its shareholders to unnecessary and increased risks.

Based on our calculations, and excluding votes cast by Meta CEO Mark Zuckerberg, the 2025 Proposal received support from more than 46 percent of the votes cast by independent shareholders, among the highest levels of support for a human rights-related shareholder proposal at a U.S. public company during the 2025 proxy season. [2] [3]

Escalating Hate and Platform Risk Exposure

Multiple analyses indicate that antisemitic and other identity-based hate is proliferating across Meta’s platforms, raising concerns about the Company’s management of content-related risks.

Evidence of Prolific Harmful Content Across Meta Platforms

- Facebook: Following the policy changes, ADL documented a nearly fivefold increase in antisemitic comments per day on the Facebook accounts of Jewish members of Congress, suggesting a more permissive environment for harmful discourse. [4]

- Instagram: Testing by the ADL Center on Extremism found that Instagram removed only 7% of reported extremist and hateful content, demonstrating a systemic failure to protect users. [5] Key research findings include:

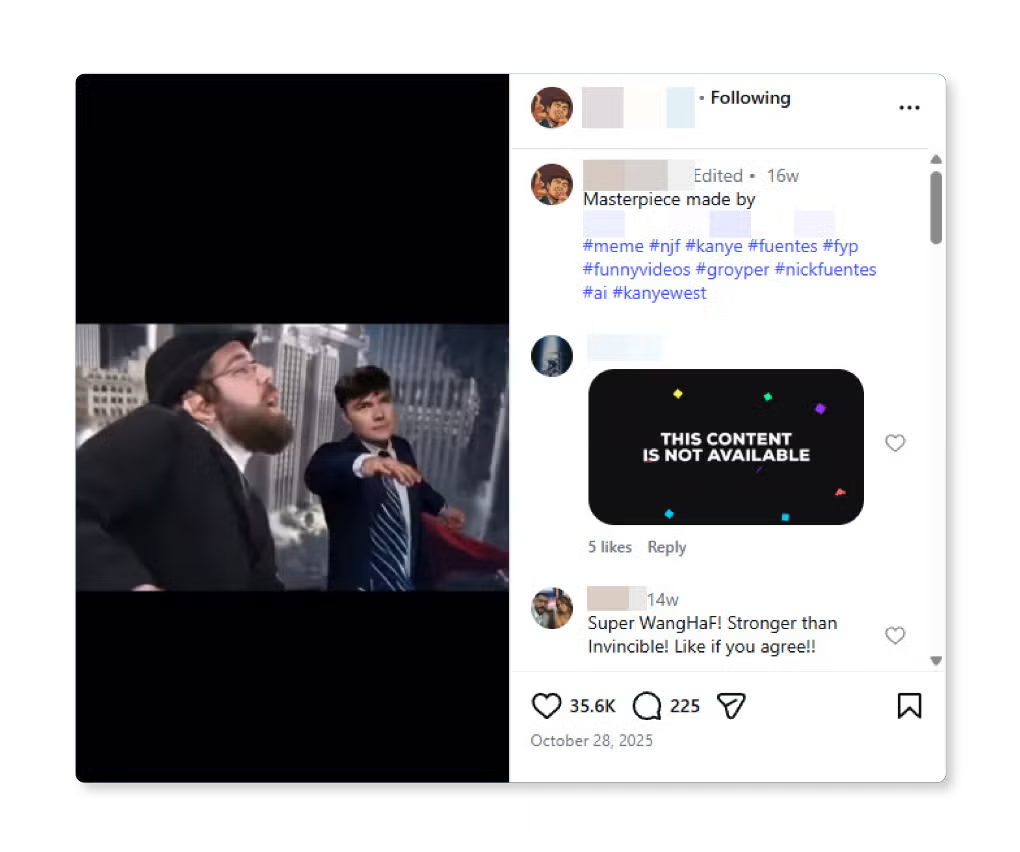

- 105 accounts affiliated with white supremacist Nick Fuentes’ Groyper network, collectively amassing more than 1.4 million followers, which regularly posted antisemitic conspiracy theories, Holocaust denial, and pro-Hitler content, including content created by Fuentes, who was purportedly banned from Instagram. (See appendix)

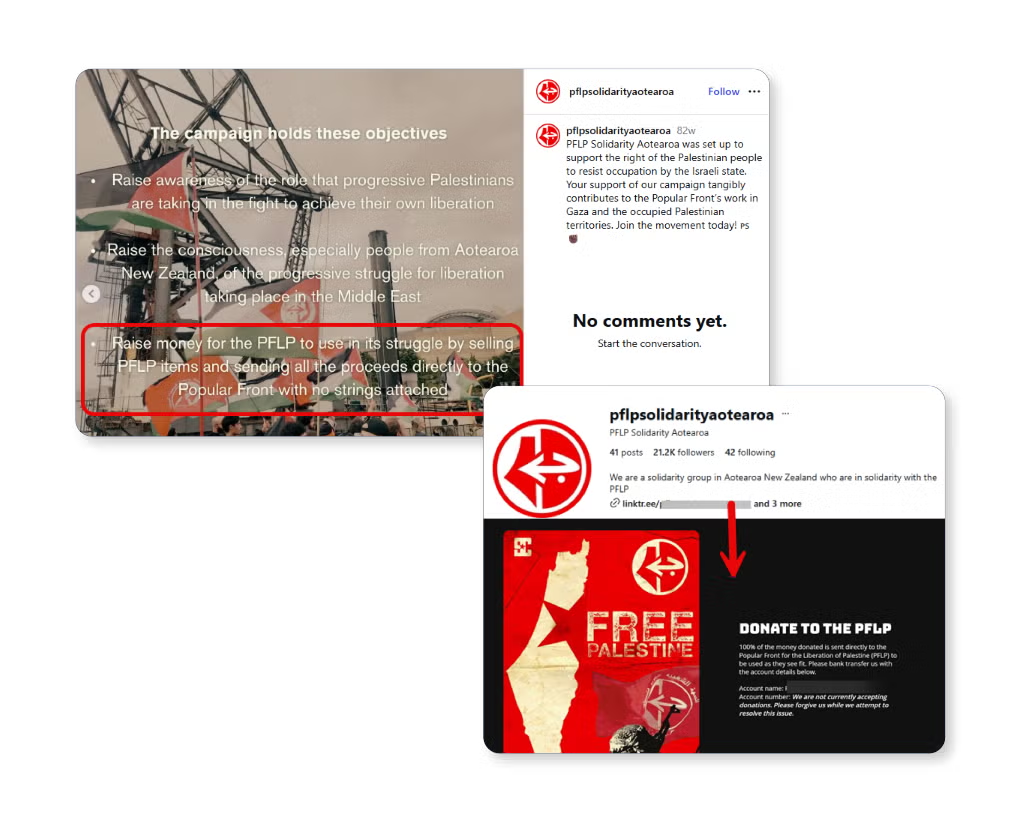

- Accounts directly or indirectly linked to U.S.-designated Foreign Terrorist Organizations, Special Designated Terrorist Groups and other similar organizations, including ISIS, Al-Qaida, and the Popular Front for the Liberation of Palestine, retained over 340,000 followers on the platform. (See appendix)

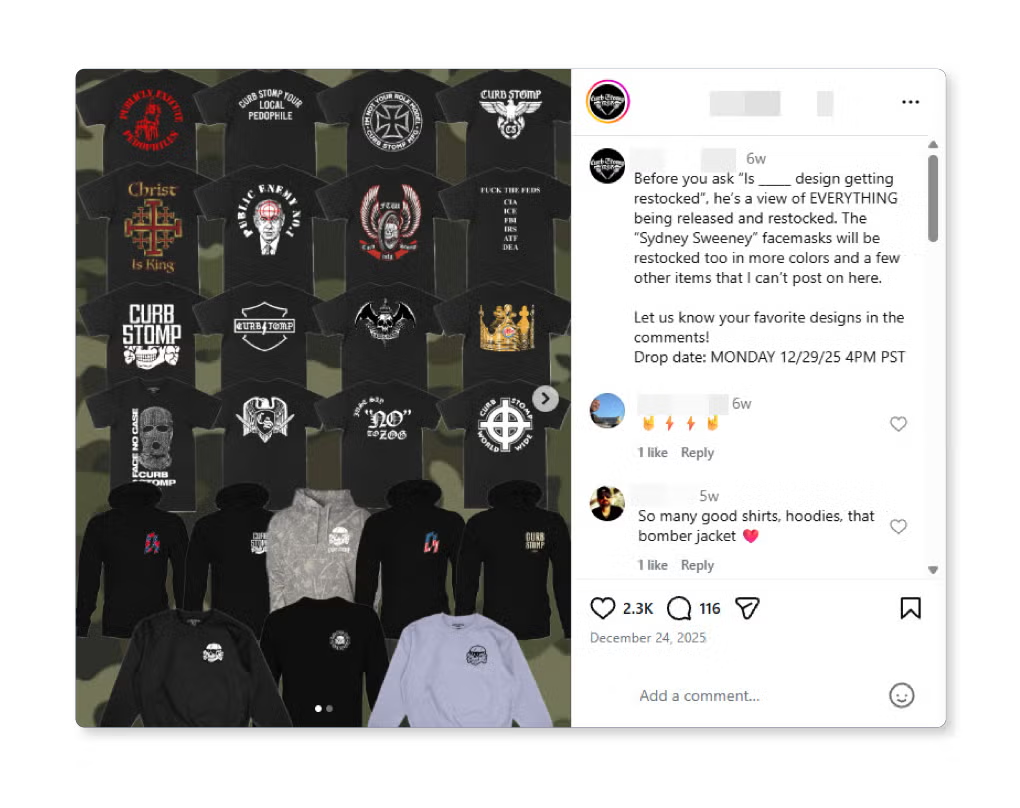

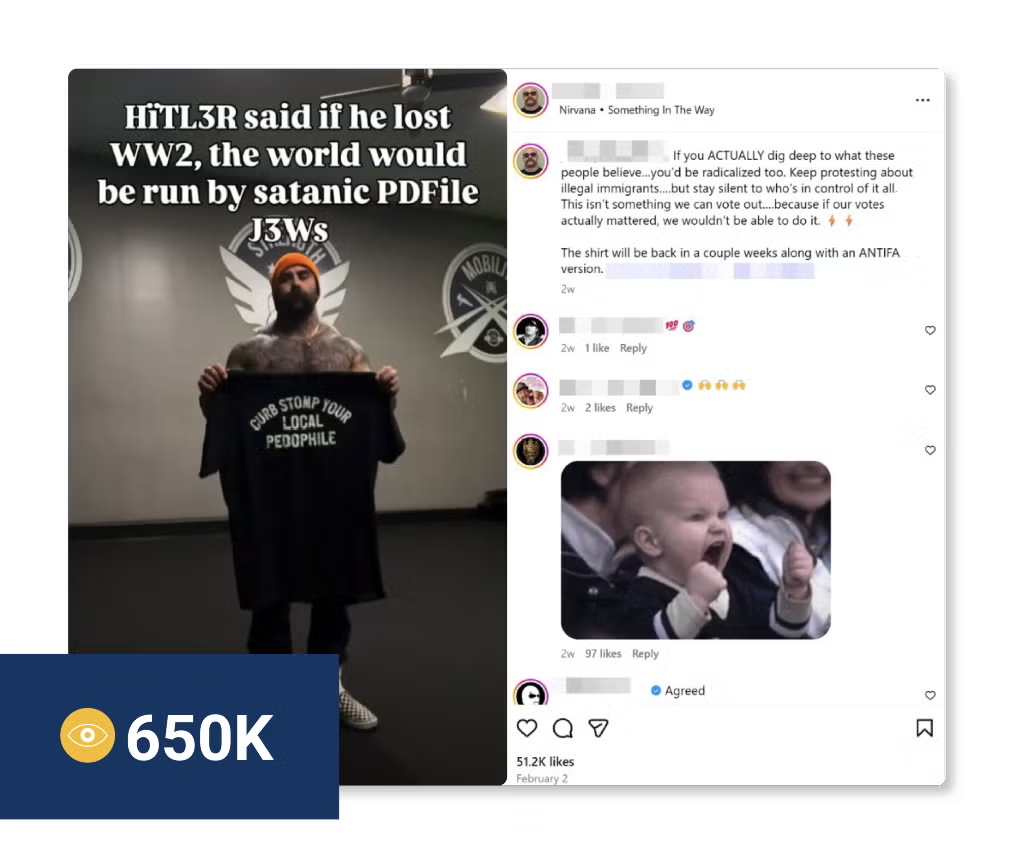

- A single extremist merchandise vendor selling apparel bearing Nazi symbols — including Sonnenrads, Totenkopfs, and SS bolts — accumulated more than 3.2 million views. (See appendix)

- Llama: ADL’s AI Index found that Meta’s Llama underperformed many of its peers in rebutting antisemitic and extremist content, suggesting potential gaps in the Company’s AI safety controls and governance. [6]

Disproportionate Impact on At-Risk Groups

- Jewish users: ADL data shows that Jewish adults were more likely to be harassed online for their religion (34% of those harassed compared to 18% of non-Jews) and 41% changed their online behavior to avoid being recognized as Jewish. Nearly two-thirds (63%) felt less safe than they did last year. [7]

- Other at-risk groups: The harms also appear broader across the platform. 76% of women and 78% of people of color report being targeted by increased harmful content following the policy changes. Also, 75% of LGBTQ respondents report increased exposure to harmful content following the policy changes. [8] GLAAD’s 2025 Social Media Safety Index likewise found that Meta’s recent policy revisions introduced explicit allowances for certain forms of harmful speech targeting LGBTQ+ individuals and reduced prior safeguards. [9] Combined, these statistics suggest that the weakening of safeguards has had wider effects across vulnerable communities. [10]

Meta’s Own Independent Oversight Board Continues to Raise Concerns

Meta’s own independent Oversight Board continues to raise concerns about the Company’s January 7, 2025 changes to its fact-checking and Hateful Conduct policies. In April 2025, the Oversight Board stated that these changes were announced hastily and without confirmation as to whether Meta performed the human rights due diligence that it has committed to under the UN Guiding Principles. The Oversight Board specifically called on Meta to assess and publicly report on the human rights impacts of those updates, including potential harms to vulnerable groups. The Oversight Board also questioned Meta’s replacement of third-party fact-checking in the United States with “Community Notes,” recommending that the Company test whether the new system is as effective as professional fact-checking in slowing harmful misinformation. [11] [12]

Since then, the Oversight Board has gone further. In a March 26, 2026 Policy Advisory Opinion on Community Notes’ global expansion, the Oversight Board identified additional human rights risks associated with rolling out the program internationally, including risks in repressive environments, crisis-affected countries, and environments with active disinformation networks, as well as risks to minority communities. The Oversight Board found that “community notes could, in certain contexts, privilege dominant political, ethnic or linguistic groups, and marginalize minority groups” and that the program is poorly suited to crisis and conflict situations due to publication delays. [13] This sustained pattern of formal concern from Meta’s own independent oversight body reinforces the conclusion that the Company has not adequately assessed or mitigated the risks associated with its January 7, 2025 policy changes.

Expanding Litigation and Regulatory Exposure Reinforces the Business Risk

Meta is already facing a growing wave of litigation tied to platform safety and alleged societal harms. [14] In its 2025 Annual Report on Form 10-K filed with the U.S. Securities and Exchange Commission, Meta stated that the number and potential significance of its litigation and investigations have increased, that it faces risks from matters involving content, content moderation, and societal harms, and that damages or penalties sought across its legal proceedings could amount to “hundreds of billions of dollars and, as a result, could be material to the financial condition of the [C]ompany.” [15] Recent cases underscore this risk:

- In March 2026, a New Mexico jury found Meta violated state law and ordered it to pay $375 million in civil penalties in a case alleging that the Company misled users about the safety of Facebook, Instagram, and WhatsApp and enabled child sexual exploitation on those platforms. [16]

- Also in March 2026, a Los Angeles jury found Meta and Google negligent for designing social media platforms harmful to young people. The verdict, which turned on product-design and failure-to-warn theories, is serving as a bellwether for thousands of similar cases consolidated in California state court. [17]

- In April 2026, the Massachusetts Supreme Judicial Court allowed the state’s case against Instagram to proceed, holding that Section 230 of the Communications Decency Act of 1996 does not bar claims targeting Meta’s own alleged conduct in designing a platform that capitalizes on children’s vulnerabilities and in misleading consumers about safety. Thirty-three other states are pursuing similar cases against Meta in federal court. [18]

In October 2025, the European Commission issued preliminary findings that Meta breached the Digital Services Act by failing to provide adequate transparency regarding content moderation and researcher data access, with potential fines of up to 6% of Meta’s global annual revenue. [19] [20]

Taken together, these developments clearly underscore that weaknesses in Meta’s risk governance and content moderation can translate into material legal, financial, and operational exposure for the Company and the destruction of shareholder value. Although these matters arise in different contexts, they reflect a common theory: Meta faces growing liability when its systems, product design choices, and safety controls are alleged to have contributed to foreseeable harm. We believe this broader pattern reinforces the need for greater transparency and accountability regarding how the Company identifies, assesses, and mitigates such risks.

Risks to Meta’s Revenue Model and Shareholder Value

Meta’s inadequate response to hate speech presents material risks to its revenue model and long-term shareholder value. Because Meta’s business depends overwhelmingly on advertising, failures in content-risk management can affect revenue through two reinforcing channels: advertiser demand and user engagement. Heightened brand-safety concerns may cause advertisers to reduce spending, tighten placement restrictions, or demand lower pricing, while declining user trust and engagement can reduce the volume, quality, and effectiveness of Meta’s advertising inventory.

- Advertiser Demand and Pricing (Demand-Side Risk): Advertisers remain sensitive to brand safety, making Meta’s core revenue engine vulnerable to content-governance failures. Given that advertising accounted for approximately 97% of Meta’s total revenue ($200.97 billion in 2025), any policy rollback that results in increased harmful content, including Meta’s most recent policy rollback and the documented nearly fivefold increase in antisemitic comments that followed it, creates direct financial exposure. [21] [22]

- User Engagement and Advertising Supply (Supply-Side Risk): Weakened moderation policies pose a “supply-side” threat by degrading the platform experience for high-value user demographics. Because Meta’s business model is predicated on the monetization of user attention through targeted impressions, any erosion of trust can drive users toward safer, more moderated competitors. Reductions in daily active users, time spent, or engagement quality can directly impair the volume and effectiveness of Meta’s advertising inventory. As user trust declines, the core monetization engine can be materially weakened, increasing churn risk and reducing the long-term value of the Company’s primary asset: its advertising supply.

- Executive Statements Underscore the Risk: Internal recognition of these risks by Meta’s leadership underscores their materiality to shareholder value. Meta’s Chief Marketing Officer, Alex Schultz, has explicitly noted that advertiser concerns are centered heavily on brand-safety issues related to hate speech and adjacent content risks. [23] Furthermore, Meta’s Chief Global Affairs Officer, Nicola Mendelsohn, has publicly committed the Company to a continued focus on “brand safety and suitability,” suggesting an internal acknowledgment that these content-governance issues pose ongoing threats to the business. [24] When harmful content increases, the probability rises that major advertisers will reallocate or discount spend to avoid reputational contagion, a trend that directly impacts Meta’s long-term revenue growth and pricing power.

Limited Engagement Has Not Resolved the Underlying Concerns

Since June 2025, JLens and ADL have sought to engage constructively with Meta regarding the concerns underlying this proposal. While some engagement occurred, in our view, Meta did not provide substantive responses to a number of key questions or commit to meaningful policy changes, including creating a clear framework to mitigate the risks to users and shareholders. It is clear to us that this record of limited engagement supports the need for the independent report requested by Proposal 8.

Conclusion

Proposal 8 requests that Meta provide a detailed public report evaluating how effectively it addresses hate content, particularly antisemitism, across its platforms. Per Proposal 8, the report should cover content moderation practices, enforcement, transparency, and the use of independent expertise, enabling investors to assess whether the Company is managing these risks effectively.

As set forth in this memo, over the past year, antisemitism and online harassment on Meta’s platforms have continued, regulatory scrutiny has intensified, and Meta’s own Oversight Board has raised concerns about the Company’s approach. These developments underscore that the risks at issue are not only societal concerns, but material business risks affecting Meta’s brand, user growth, and exposure to regulatory and legal costs.

As a shareholder of Meta, you have a direct interest in how the Company manages these risks. By voting FOR Proposal 8, shareholders are requesting the transparency and accountability that we believe are necessary to evaluate whether Meta is effectively managing these risks and protecting long-term shareholder value.

Stand Against Hate.

Protect Shareholder Value.

Support Accountability and Transparent Reporting.

Vote FOR Proposal 8

For more information, please contact Dani Nurick, JLens Director of Advocacy, at

Appendix:

The following screenshots document a sampling of content identified by ADL researchers on Instagram between October 2025 and March 2026, as detailed in “How Meta’s Content Moderation Practices Risk Turning Instagram into a Hub for Hate.” [5] All content shown was publicly accessible on the platform at the time of capture. These examples are illustrative, not exhaustive, and were drawn from a broader set of accounts and posts that collectively reached millions of users. Where ADL reported content through standard user reporting channels, Instagram removed only 7% of flagged material.

Antisemitic Content:

[Groyper image] AI-generated reel from the Groyper network depicting white supremacist Nick Fuentes attacking Orthodox Jewish men — 35.6K likes as of capture. Representative of AI-generated antisemitic content circulating across the 105 Groyper-affiliated accounts identified by ADL researchers, which collectively amassed more than 1.4 million followers on Instagram. Captured Feb. 18, 2026. (Source: Instagram/Screenshot)

Content Promoting U.S. Government-Designated Foreign Terrorist Organizations:

[PFLP solidarity account image] Instagram account @pflpsolidarityaotearoa (21.2K followers as of capture) openly soliciting donations for the Popular Front for the Liberation of Palestine (PFLP), a U.S.-designated Foreign Terrorist Organization. The account’s bio directs users to a LinkTree with donation instructions, and posts explicitly state that proceeds go “directly to the Popular Front with no strings attached.” Representative of accounts directly or indirectly linked to U.S.-designated Foreign Terrorist Organizations — including ISIS, Al-Qaida, and the PFLP — that collectively retained more than 340,000 followers on Instagram. Captured Feb. 20, 2026. (Source: Instagram/Screenshot)

Extremist Merchandise for Sale:

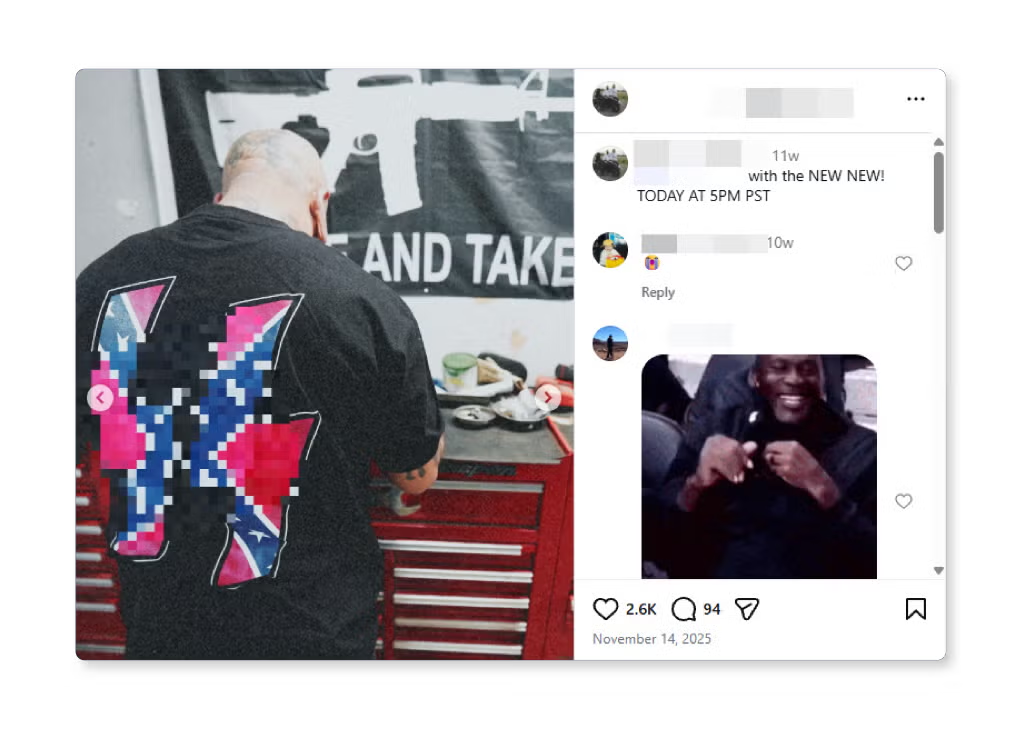

[Curb Stomp merchandise drop images] Instagram posts by Curb Stomp MFG promoting a restock of extremist-branded apparel, including hoodies, crewnecks, shirts, and facemasks. The account operates as a commercial storefront for merchandise featuring white supremacist and neo-Nazi iconography. Captured Feb. 23, 2026. (Source: Instagram/Screenshot)

White Supremacist Apparel:

[SS bolts / Confederate flag shirt image] Instagram post from Curb Stomp MFG promoting apparel featuring obscured SS bolts overlaid on a Confederate flag — 2.6K likes as of capture. ADL researchers documented the vendor selling merchandise bearing Nazi symbols including Sonnenrads, Totenkopfs, and SS bolts. Captured Feb. 19, 2026. (Source: Instagram/Screenshot)

Holocaust Denial:

[Curb Stomp Holocaust denial image] Instagram reel by the owner of Curb Stomp MFG promoting the antisemitic claim that Hitler’s defeat enabled Jewish control of the world — 51.2K likes, 650K views as of capture. Content from Curb Stomp MFG’s and the owner’s accounts has accumulated more than 3.2 million views on the platform. Captured March 3, 2026. (Source: Instagram/Screenshot)

The examples above are drawn from ADL’s full report, “How Meta’s Content Moderation Practices Risk Turning Instagram into a Hub for Hate” (2026), which contains the complete methodology, findings, and additional documented examples. Available at: https://www.adl.org/resources/report/how-metas-content-moderation-practices-risk-turning-instagram-hub-hate

Endnotes:

- [1] “More Speech and Fewer Mistakes.” Meta, January 7, 2025, https://about.fb.com/news/2025/01/meta-more-speech-fewer-mistakes/

- [2] Meta Platforms, Inc. Form 8-K (Current Report). U.S. Securities and Exchange Commission, July 30, 2025, https://www.sec.gov/ix?doc=/Archives/edgar/data/0001326801/000132680125000090/meta-20250528.htm

- [3] “Shareholder Proposal Demanding Accountability for Antisemitism and Hate Across Meta’s Platforms Ranks as Top-Performing Human Rights Concern.” JLens Network / Anti-Defamation League, June 10, 2025, https://www.jlensnetwork.org/shareholder-proposal-demanding-accountability-for-antisemitism-and-hate-across-metas-platforms-ranks-as-top-performing-human-rights-concern/

- [4] “Meta’s Hate Policy Rollback Linked to Increased Antisemitism,” Anti-Defamation League, May 8, 2025, https://www.adl.org/resources/article/metas-hate-policy-rollback-linked-increased-antisemiti

- [5] “How Meta’s Content Moderation Practices Risk Turning Instagram into a Hub for Hate.” Anti-Defamation League, 2026, https://www.adl.org/resources/report/how-metas-content-moderation-practices-risk-turning-instagram-hub-hate

- [6] “ADL AI Index,” Anti-Defamation League, January 28, 2026, https://www.adl.org/adl-ai-index

- [7] Anti-Defamation League (ADL), Online Hate and Harassment: The American Experience 2024, June 11, 2024. https://www.adl.org/resources/report/online-hate-and-harassment-american-experience-2024

- [8] UltraViolet, “Meta Report: Platforming Hate and Disinformation — How Meta Fails to Protect Users” (Version 3, full report PDF), https://makemetasafe.org/wp-content/uploads/sites/401/UV-MetaReport_v3-Full.pdf

- [9] “2025 Social Media Safety Index,” GLAAD, https://glaad.org/smsi/2025/meta-platforms/.

- [10] “MORE HATE, FEWER PROTECTIONS. Harmful Content on Meta’s Platforms in the Wake of Policy Rollbacks, According to Users,” UltraViolet, GLAAD, All Out (Make Meta Safe Campaign), May 29, 2025, https://makemetasafe.org/wp-content/uploads/sites/401/UV-MetaReport_v3-Full.pdf

- [11] Oversight Board, “Wide-Ranging Decisions Protect Speech and Address Harms,” Oversight Board (Apr. 23, 2025), https://www.oversightboard.com/news/wide-ranging-decisions-protect-speech-and-address-harms

- [12] Meta Platforms, Inc., “Community Notes,” Meta Technologies, available at: https://transparency.meta.com/features/community-notes.

- [13] Oversight Board, “Assessing Meta’s Plans to Expand Community Notes,” Oversight Board (March 26, 2026), https://www.oversightboard.com/decision/pao-007g5zuv/

- [14] Dominic Patten, “Meta & Google Get Less Than A Financial Slap On The Wrist As L.A. Social Media Trial Jury Orders Tech Giants To Pay Out Just $6M In Total Damages” Deadline, March 25, 2026, https://deadline.com/2026/03/meta-google-liable-social-media-trial-los-angeles-1236765620/

- [15] Meta Platforms, Inc. Annual Report on Form 10-K for the Fiscal Year Ended December 31, 2025. U.S. Securities and Exchange Commission, January 28, 2026, https://www.sec.gov/Archives/edgar/data/1326801/000162828026003942/meta-20251231.htm

- [16] Diana Novak Jones, “Meta ordered to pay $375 million in New Mexico trial over child exploitation, user safety claims” Reuters, March 24, 2026, last updated March 27, 2026, https://www.reuters.com/sustainability/boards-policy-regulation/jury-orders-meta-pay-375-mln-new-mexico-lawsuit-over-child-sexual-exploitation-2026-03-24/

- [17] “What did jury decide in social media case against Meta and Google?” Reuters, March 25, 2026, last updated March 26, 2026, https://www.reuters.com/legal/litigation/what-did-jury-decide-social-media-case-against-meta-google-2026-03-25/

- [18] Commonwealth vs. Meta Platforms, Inc., & another, No. SJC-13747, Massachusetts Supreme Judicial Court, 10 Apr. 2026, www.mass.gov/doc/commonwealth-v-meta-platforms-inc-sjc-m13747/download.

- [19] Katrina Bishop, “EU says TikTok and Meta broke transparency rules under landmark tech law,” CNBC, October 24, 2025, https://www.cnbc.com/2025/10/24/eu-says-tiktok-and-meta-broke-transparency-rules-under-tech-law.html.

- [20] Robert Booth and Lisa O’Carroll, “Meta found in breach of EU law over ‘ineffective’ complaints system for flagging illegal content,” The Guardian, October 24, 2025, https://www.theguardian.com/technology/2025/oct/24/instagram-facebook-breach-eu-law-content-flagging.

- [21] Mark Sweney, “Advertiser Exodus from X: Survey Shows 2025 Cuts Amid Concerns Over Content and Trust,” The Guardian, September 5, 2024, https://www.theguardian.com/media/article/2024/sep/05/advertiser-exodus-x-survey-2025-elon-musk.

- [22] Mike Masnick, “Advertisers Aren’t Thrilled With Zuckerberg’s Embrace of Hate Speech.” Techdirt, January 29, 2025, https://www.techdirt.com/2025/01/29/advertisers-arent-thrilled-with-zuckerbergs-embrace-of-hate-speech/

- [23] Alex Schultz. “Q4 2023 Earnings Call.” Meta Platforms, Inc., February 1, 2024, https://investor.fb.com/investor-events/event-details/2024/Meta-Fourth-Quarter-2023-Results-Conference-Call/default.aspx

- [24] Meta’s Chief Global Affairs Officer Nicola Mendelsohn, statement on brand safety and suitability. As cited in Lara O’Reilly, “Advertisers say Meta’s content-moderation changes make them uneasy. They won’t stop spending.” Business Insider, January 8, 2025, https://www.businessinsider.com/meta-content-moderation-update-impact-ad-business-brand-safety-2025-1.

About the Anti-Defamation League

ADL is the leading anti-hate organization in the world. Founded in 1913, its timeless mission is “to stop the defamation of the Jewish people and to secure justice and fair treatment to all.” Today, ADL continues to fight all forms of antisemitism and bias, using innovation and partnerships to drive impact. A global leader in combating antisemitism, countering extremism and battling bigotry wherever and whenever it happens, ADL works to protect democracy and ensure a just and inclusive society for all.

About JLens

Founded in 2012, JLens is a 501(c)(3) nonprofit and Registered Investment Advisor that empowers investors to align their capital with Jewish values and advocates for Jewish communal priorities in the corporate arena. JLens’ Jewish Investor Network is composed of over 35 Jewish institutions, representing $12 billion in communal capital. In 2022, JLens established an affiliation with ADL (Anti-Defamation League), the leading anti-hate organization in the world. JLens operates as a nonprofit membership corporation, with ADL serving as its sole member. More at www.jlensnetwork.org.

THIS IS NOT A PROXY SOLICITATION AND NO PROXY CARDS WILL BE ACCEPTED

Please execute and return your proxy card according to Meta’s instructions.